Hello and welcome to the Introduction to Python Pandas tutorial. In this lecture we’re going to briefly discuss what pandas is and how to get it installed on your computer.

Python Pandas Introduction

- Python Pandas is an open-source library that’s built on top of NumPy.

- Pandas allows for fast data analysis, cleaning, and preparation.

- It excels in performance and productivity.

- It also has built-in data visualization features.

- Pandas can also work with data from a wide variety of sources.
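As a small illustration of the last point, here is a minimal sketch that builds the same table from two different sources. The CSV text and the list of records are made-up stand-ins for a real file or a parsed JSON response.

```python
import io
import pandas as pd

# Hypothetical CSV text standing in for a file on disk.
csv_text = "Cars,Passing\nBMW,3\nVolvo,7\nFord,2\n"
from_csv = pd.read_csv(io.StringIO(csv_text))

# The same rows as a list of dictionaries, e.g. parsed from JSON.
records = [
    {"Cars": "BMW", "Passing": 3},
    {"Cars": "Volvo", "Passing": 7},
    {"Cars": "Ford", "Passing": 2},
]
from_records = pd.DataFrame(records)

print(from_csv)
print(from_records)
```

Either route ends up as a DataFrame, so the rest of your code does not need to care where the data came from.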

A lot of people like to call pandas Python’s version of Excel. Pandas also has data visualization features, which we’ll learn about once we get to Python for data visualization.

To work with Python Pandas you’ll need to install it by going to your command line or terminal and running either conda install pandas or pip install pandas.

Why Do We Use Pandas?

The name “Pandas” refers to both “Panel Data” and “Python Data Analysis”. The library was created by Wes McKinney in 2008.

- Python Pandas allows us to analyze large datasets and draw conclusions based on statistical theory.

- It can clean messy, unstructured datasets and make them readable and relevant.

- Relevant, cleaned data is crucial for data science.
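To make the cleaning point concrete, here is a minimal sketch on a made-up table. The column names and values are hypothetical, and real datasets usually need more steps than this.

```python
import pandas as pd

# Hypothetical messy data: stray whitespace, a missing name, a duplicate row.
raw = pd.DataFrame({
    "Cars": [" BMW", "Volvo ", "Ford", "Ford", None],
    "Passing": [3, 7, 2, 2, 5],
})

cleaned = (
    raw.dropna(subset=["Cars"])                          # drop rows with no car name
       .assign(Cars=lambda df: df["Cars"].str.strip())   # trim whitespace
       .drop_duplicates()                                # remove repeated rows
       .reset_index(drop=True)                           # renumber rows from 0
)
print(cleaned)
```

After these steps only the three distinct, named cars remain.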

Python Pandas Install

If you have the Anaconda distribution of Python, you don’t need to install pandas because it is installed by default. If you installed Python another way, you can install pandas with pip from the command prompt:

C:\Users\Your Name>pip install pandas
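One quick way to check that the install worked is to import pandas from Python and print its version. The version string you see depends on your setup, so none is shown here.

```python
import pandas as pd

# If this import succeeds, pandas is installed.
installed_version = pd.__version__
print(installed_version)
```

If the import fails with ModuleNotFoundError, pandas was probably installed into a different Python environment than the one you are running.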

Importing Python Pandas Package

import pandas as pd

# here we are using pd as the alias for the pandas package

First code after importing pandas as pd:

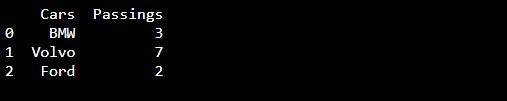

import pandas as pd

my_dataset = {

'Cars': ["BMW", "Volvo", "Ford"],

'Passing': [3, 7, 2]

}

mycar = pd.DataFrame(my_dataset)

print(mycar)

Output:

Cars Passing

0 BMW 3

1 Volvo 7

2 Ford 2
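Once the DataFrame exists, a few one-liners are enough to start exploring it. The sketch below rebuilds the same mycar table and looks at its shape, a column total, and a filtered subset. The threshold of 2 is just an arbitrary choice for the example.

```python
import pandas as pd

mycar = pd.DataFrame({
    "Cars": ["BMW", "Volvo", "Ford"],
    "Passing": [3, 7, 2],
})

shape = mycar.shape                     # (rows, columns)
total = int(mycar["Passing"].sum())     # total of the Passing column
busy = mycar[mycar["Passing"] > 2]      # rows where Passing is greater than 2

print(shape)
print(total)
print(busy["Cars"].tolist())
```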

That is all for the introduction to Python Pandas. In this article we covered how to install pandas and how to import the package.

FAQs:

Consider the dataframe ‘data’. Convert the datatype of the column is_canceled to int, float, object, and category, and use the nbytes attribute to calculate the total number of bytes allocated in each case. Which of the following datatypes consumes the least memory (in bytes)?

a) int

b) float

c) object

d) category

Ans: d) category
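The original ‘data’ dataframe is not included here, so the sketch below uses a made-up is_canceled column of 0/1 flags to show how such a comparison could be run. Note that for the object dtype, nbytes only counts the references stored in the column, not the Python objects they point to.

```python
import pandas as pd

# Hypothetical stand-in for the 'data' dataframe: a 0/1 flag column.
data = pd.DataFrame({"is_canceled": [0, 1, 0, 0, 1] * 200})

# Convert the column to each dtype and record the bytes it occupies.
sizes = {
    dtype: data["is_canceled"].astype(dtype).nbytes
    for dtype in ["int", "float", "object", "category"]
}
print(sizes)

smallest = min(sizes, key=sizes.get)
print(smallest)
```

The category dtype wins here because it stores one small integer code per row plus a short list of the distinct values.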

How many columns in the ‘data’ dataframe have missing values?

a. 3

b. 5

c. 7

d. 9

Ans: a. 3
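The answer above depends on the actual ‘data’ dataframe, which is not shown here. As a sketch of how to count such columns yourself, the example below uses a small made-up table with hypothetical column names.

```python
import numpy as np
import pandas as pd

# Hypothetical table: columns "a" and "b" each contain one missing value.
data = pd.DataFrame({
    "a": [1.0, np.nan, 3.0],
    "b": ["x", "y", None],
    "c": [10, 20, 30],
})

missing_per_column = data.isna().sum()                 # missing values per column
columns_with_missing = int(data.isna().any().sum())    # columns with at least one

print(missing_per_column)
print(columns_with_missing)
```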

What does the letter ‘i’ represent in the attribute pd.DataFrame.iat?

a. Index

b. Integer

c. The imaginary part of complex number

d. Include

Ans: b. Integer
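A short sketch of the difference: iat looks up a single value by integer position, while at does the same by label. The row labels "a", "b", "c" are made up for this example.

```python
import pandas as pd

mycar = pd.DataFrame(
    {"Cars": ["BMW", "Volvo", "Ford"], "Passing": [3, 7, 2]},
    index=["a", "b", "c"],  # hypothetical row labels
)

by_position = mycar.iat[1, 0]       # second row, first column, by integer position
by_label = mycar.at["b", "Cars"]    # same cell, by row and column label

print(by_position, by_label)
```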

All right, if you missed the NumPy tutorial on this blog, please read the NumPy Tutorial for a better understanding of this Python tutorial.

Thanks and I’ll see you at the next lecture.